THEORY BUILDING IN INDUSTRIAL AND ORGANIZATIONAL PSYCHOLOGY

Jane Webster and William H. Starbuck

Pages 93-138 in C. L. Cooper and I. T. Robertson (eds.), International Review of Industrial and Organizational Psychology 1988; Wiley, 1988.

SUMMARY

I/O psychology has been progressing

slowly. This slowness arises partly from a three-way imbalance: a lack of

substantive consensus, insufficient use of theory to explain observations, and

excessive confidence in induction from empirical evidence. I/O psychologists

could accelerate progress by adopting and enforcing a substantive paradigm.

Staw (1984: 658) observed:

The micro side of

organizational behavior historically has not been strong on theory.

Organizational psychologists have been more concerned with research

methodology, perhaps because of the emphasis upon measurement issues in

personnel selection and evaluation. As an example of this methodological bent,

the I/O Psychology division of the American Psychological Association, when

confronted recently with the task of improving the field’s research, formulated

the problem as one of deficiency in methodology rather than theory

construction.... It is now time to provide equal consideration to theory

formulation.

This chapter explores

the state of theory in I/O psychology and micro-Organizational Behavior (OB).[1]

The chapter argues that these fields have progressed very slowly, and that

progress has occurred so slowly partly because of a three-way imbalance: a lack

of theoretical consensus, inadequate attention to using theory to explain

observations, coupled with excessive confidence in induction from empirical

evidence. As a physicist, J. W. N. Sullivan (1928; quoted by Weber, 1982, p.

54), remarked: ‘It is much easier to make measurements than to know exactly

what you are measuring.’

Well-informed people

hold widely divergent opinions about the centrality and influence of theory.

Consider Dubin’s (1976, p. 23) observation that

managers use theories as moral justifications, that managers may endorse job

enlargement, for example, because it permits more complete delegation of

responsibilities, raises morale and commitment, induces greater effort, and

implies a moral imperative to seek enlarged jobs and increased

responsibilities. Have these consequences anything to do with theory? Job

enlargement is not a theory, but a category of action. Not only do these

actions produce diverse consequences, but the value of any single consequence

is frequently debatable. Is it exploitative to elicit greater effort, because

workers contribute more but receive no more pay? Or is it efficient, because

workers contribute more but receive no more pay? Or is it humane, because

workers enjoy their jobs more? Or is it uplifting, because work is virtuous and

laziness despicable? Nothing compels managers to use job enlargement; they

adopt it voluntarily. Theory only describes the probable consequences if they

do use it. Furthermore, there are numerous theories about work redesigns such

as job enlargement and job enrichment, so managers can choose the theories they

prefer to espouse. Some theories emphasize the consequences of work redesign

for job satisfaction; others highlight its consequences for efficiency, and

still others its effects on accident rates or workers’ health (Campion and Thayer, 1985).

We hold that theories do

make a difference, to non-scientists as well as to scientists, and that

theories often have powerful effects. Theories are not neutral descriptions of

facts. Both prospective and retrospective theories shape facts. Indeed, the

consequences of actions may depend more strongly on the actors’ theories than

on the overt actions. King’s (1974) field experiment illustrates this point. On

the surface, the study aimed at comparing two types of job redesign: a company

enlarged jobs in two plants, and began rotating jobs in two similar plants. But

the study had a 2 x 2 design. Their boss told two of the plant managers that

the redesigns ought to raise productivity but have no effects on industrial relations;

and he told the other two plant managers that the redesigns ought to improve

industrial relations and have no effects on productivity. The observed changes

in productivity and absenteeism matched these predictions: productivity rose

significantly while absenteeism remained stable in the first two plants, and

absenteeism dropped while productivity remained constant in the other two

plants. Job rotation and job enlargement, however, yielded the same levels of

productivity and absenteeism. Thus, the differences in actual ways of working

produced no differences in productivity or absenteeism, but the different

rationales did induce different outcomes.

Theories shape facts by

guiding thinking. They tell people what to expect, where to look, what to

ignore, what actions are feasible, what values to hold. These expectations and

beliefs then influence actions and retrospective interpretations, perhaps

unconsciously (Rosenthal, 1966). Kuhn (1970) argued that scientific

collectivities develop consensus around coherent theoretical positions, or

paradigms. Because paradigms serve as frameworks for interpreting evidence, for

legitimating findings, and for deciding what studies to conduct, they steer

research into paradigm-confirming channels, and so they reinforce themselves

and remain stable for long periods. For instance, in 1909, Finkelstein reported

in his doctoral dissertation that he had synthesized benzocyclobutene

(Jones, 1966). Finkelstein’s dissertation was rejected for publication because

chemists believed, at that time, such chemicals could not exist, and so his

finding had to be erroneous. Theorists elaborated the reasons for the

impossibility of these chemicals for another 46 years, until Finkelstein’s

thesis was accidentally discovered in 1955.

Although various

observers have argued that the physical sciences have stronger consensus about

paradigms than do the social sciences, the social science findings may be even

more strongly influenced by expectations and beliefs. Because these

expectations and beliefs do not win consensus, they may amplify the

inconsistencies across studies. Among others, Chapman and Chapman (1969),

Mahoney and DeMonbreun (1977) and Snyder (1981) have

presented evidence that people holding prior beliefs emphasize confirmatory

strategies of investigation, rarely use disconfirmatory strategies, and

discount disconfirming observations; these confirmatory strategies turn

theories into self-fulfilling prophecies in situations where investigators’

behaviors can elicit diverse responses or where investigators can interpret

their observations in many ways (Tweney et al., 1981). Mahoney (1977)

demonstrated that journal reviewers tend strongly to recommend publication of

manuscripts that confirm their beliefs and to give these manuscripts high

ratings for methodology, whereas reviewers tend strongly to recommend rejection

of manuscripts that contradict their beliefs and to give these manuscripts low

ratings for methodology. Faust (1984) extrapolated these ideas to theory

evaluation and to review articles, such as those in this volume, but he did not

take the obvious next step of gathering data to confirm his hypotheses.

Thus, theories may have

negative consequences. Ineffective theories sustain themselves and tend to

stabilize a science in a state of incompetence, just as effective theories may

suggest insightful experiments that make a science more powerful. Theories

about which scientists disagree foster divergent findings and incomparable

studies that claim to be comparable. So scientists could be better off with no

theories at all than with theories that lead them nowhere or in incompatible

directions. On the other hand, scientists may have to reach consensus on some

base-line theoretical propositions in order to evaluate adequately the effectiveness

of these base-line propositions and the effectiveness of newer theories that

build on these propositions. Consensus on base-line theoretical propositions,

even ones that are somewhat erroneous, may also be an essential prerequisite to

the accumulation of knowledge because such consensus leads scientists to view

their studies in a communal frame of reference (Kuhn, 1970). Thus, it is an

interesting question whether the existing theories or the existing degrees of

theoretical consensus have been aiding or impeding scientific progress in I/O

psychology.

Consequently and

paradoxically, this chapter addresses theory building empirically, and the

chapter’s outline matches the sequence in which we pose questions and seek

answers for them.

First we ask: How much

progress has occurred in I/O psychology? If theories are becoming more and more

effective over time, they should explain higher and higher percentages of

variance. Observing the effect sizes for some major variables, we surmise that

I/O theories have not been improving.

Second, hunting an

explanation for no progress or negative progress, we examine indicators of

paradigm consensus. To our surprise, I/O psychology does not look so different

from chemistry and physics, fields that are perceived as having high paradigm

consensus and as making rapid progress. However, physical science paradigms

embrace both substance and methodology, whereas I/O psychology paradigms

strongly emphasize methodology and pay little attention to substance.

Third, we hypothesize

that I/O psychology’s methodological emphasis is a response to a real problem,

the problem of detecting meaningful research findings against a background of

small, theoretically meaningless, but statistically significant relationships.

Correlations published in the Journal of Applied Psychology seem to

support this conjecture. Thus, I/O psychologists may be de-emphasizing

substance because they do not trust their inferences from empirical evidence.

In the final section, we

propose that I/O psychologists accelerate the field’s progress by adopting and

enforcing a substantive paradigm. We believe that I/O psychologists could

embrace some base-line theoretical propositions that are as sound as Newton’s

laws, and using base-line propositions would project findings into shared

perceptual frameworks that would reinforce the collective nature of research.

PROGRESS IN EXPLAINING VARIANCE

Theories may be evaluated in many ways.

Webb (1961) said good theories exhibit knowledge, skepticism and generalizability. Lave and March (1975) said good theories

are metaphors that embody truth, beauty and justice; whereas unattractive

theories are inaccurate, immoral or unaesthetic. Daft and Wiginton

(1979) said that influential theories provide metaphors, images and concepts that

shape scientists’ definitions of their worlds. McGuire (1983) noted that people

may appraise theories according to internal criteria, such as their logical

consistency, or according to external criteria, such as the statuses of their

authors. Miner (1984) tried to rate theories’ scientific validity and

usefulness in application. Landy and Vasey (1984) pointed out tradeoffs between parsimony and

elegance and between literal and figurative modeling.

Effect sizes measure

theories’ effectiveness in explaining empirical observations or predicting

them. Nelson et al. (1986) found that

psychologists’ confidence in research depends primarily on significance levels

and secondarily on effect sizes. But investigators can directly control

significance levels by making more or fewer observations, so effect sizes

afford more robust measures of effectiveness.
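The dependence of significance on sample size can be sketched with the standard t statistic for a correlation, t = r * sqrt((n - 2) / (1 - r^2)). The function name, the sample sizes, and the use of 1.96 as a rough two-tailed 5 per cent cutoff are illustrative assumptions, not values taken from the studies reviewed here.

```python
import math


def t_statistic(r, n):
    """t statistic for testing a Pearson correlation r from n observations
    against zero: t = r * sqrt((n - 2) / (1 - r**2))."""
    return r * math.sqrt((n - 2) / (1 - r * r))


# Hypothetical illustration: the same small correlation, r = .10,
# evaluated at increasingly large (assumed) sample sizes.
ROUGH_CUTOFF = 1.96  # normal approximation to the two-tailed 5% critical value

for n in (100, 400, 1600):
    t = t_statistic(0.10, n)
    verdict = "exceeds" if t > ROUGH_CUTOFF else "does not exceed"
    print(f"n = {n:4d}: t = {t:.2f} ({verdict} the rough cutoff)")
```

The effect size stays at r = .10 throughout; only the number of observations changes whether the result would be called significant.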

According to the usual

assumptions about empirical research, theoretical progress should produce

rising effect sizes; for example, correlations should get larger and larger over

time. Kaplan (1963: 351-5) identified eight ways in which explanations may be

open to further development; his arguments imply that theories can be improved

by:

1. taking account of more determining factors,
2. spelling out the conditions under which theories should be true,
3. making theories more accurate by refining measures or by specifying more precisely the relations among variables,
4. decomposing general categories into more precise subclasses, or aggregating complementary subclasses into general categories,
5. extending theories to more instances,
6. building up evidence for or against theories’ assumptions or predictions,
7. embedding theories in theoretical hierarchies, and
8. augmenting theories with explanations for other variables or situations.

The first four of these actions should

increase effect sizes if the theories are fundamentally correct, but not if the

theories are incorrect. Unless it is combined with the first four actions,

action (5) might decrease effect sizes even for approximately correct theories.

Action (6) could produce low effect sizes if theories are incorrect.

Social scientists

commonly use coefficients of determination, r², to measure effect

sizes. Some methodologists have been advocating that the absolute value of r

affords a more dependable metric than r² in some instances (Ozer,

1985; Nelson et al., 1986). For the

purposes of this chapter, these distinctions make no difference because r² and the absolute value

of r increase and decrease together. We do, however, want to recognize the

differences between positive and negative relationships, so we use r.

Of the nine effect

measures we use, six are bivariate correlations. One can argue that, to capture

the total import of a stream of research, one has to examine the simultaneous

effects of several independent variables. Various researchers have advocated

multivariate research as a solution to low correlations (Tinsley and Heesacker, 1984; Hackett and Guion,

1985). However, in practice, multivariate research in I/O psychology has not

fulfilled these expectations, and the articles reviewing I/O research have not

noted any dramatic results from the use of multivariate analyses. For instance,

McEvoy and Cascio (1985)

observed that the effect sizes for turnover models have remained small despite

research incorporating many more variables. One reason is that investigators

deal with simultaneous effects in more than one way: they can observe several

independent variables that are varying freely; they can control for moderating

variables statistically; and they can control for contingency variables by

selecting sites or subjects or situations. It is far from obvious that

multivariate correlations obtained in uncontrolled situations should be higher

than bivariate correlations obtained in controlled situations. Indeed, the

rather small gains yielded by multivariate analyses suggest that careful

selection and control of sites or subjects or situations may be much more

important than we have generally recognized.

Scientists’ own

characteristics afford another reason for measuring progress with bivariate

correlations. To be useful, scientific explanations have to be understandable

by scientists; and scientists nearly always describe their findings in

bivariate terms, or occasionally trivariate terms. Even those scientists who

advocate multivariate analyses most fervently fall back upon bivariate and

trivariate interpretations when they try to explain what their analyses really

mean. This brings to mind a practical lesson that Box and Draper (1969)

extracted from their efforts to use experiments to discover more effective ways

to run factories: Box and Draper concluded that practical experiments should

manipulate only two or three variables at a time because the people who

interpret the experimental findings have too much difficulty making sense of

interactions among four or more variables. Speaking directly of the inferences

drawn during scientific research, Faust (1984) too pointed out the difficulties

that scientists have in understanding four-way interactions (Meehl, 1954; Goldberg, 1970). He noted that the great

theoretical contributions to the physical sciences have been distinguished by

their parsimony and simplicity rather than by their articulation of complexity.

Thus, creating theories that psychologists themselves will find satisfying

probably requires the finding of strong relationships among two or three

variables.

To track progress in I/O

theory building, we gathered data on effect sizes for five variables that I/O

psychologists have often studied. Staw (1984)

identified four heavily researched variables: job satisfaction, absenteeism,

turnover and job performance. I/O psychologists also regard leadership as an

important topic: three of the five annual reviews of organizational behavior

have included it (Mitchell, 1979; Schneider, 1985; House and Singh, 1987).

Other evidence supports

the centrality of these five variables for I/O psychologists. De Meuse (1986) made a census of dependent variables in I/O

psychology, and identified job satisfaction as one of the most frequently used

measures; it had been the focus of over 3000 studies by 1976 (Locke, 1976).

Psychologists have correlated job satisfaction with numerous variables: Here,

we examine its correlations with job performance and with absenteeism.

Researchers have made job performance I/O psychology’s most important dependent

variable, and absenteeism has attracted research attention because of its costs

(Hackett and Guion, 1985). We look at correlations of

job satisfaction with absenteeism because researchers have viewed absenteeism

as a consequence of employees’ negative attitudes (Staw,

1984).

Investigators have

produced over 1000 studies on turnover (Steers and Mowday,

1981). Recent research falls into one of two categories: turnover as the

dependent variable when assessing a new work procedure, and correlations

between turnover and stated intentions to quit (Staw,

1984).

Although researchers

have correlated job performance with job satisfaction for over fifty years,

more consistent performance differences have emerged in studies of behavior

modification and goal setting (Staw, 1984). Miner

(1984) surveyed organizational scientists, who nominated behavior modification

and goal setting as two of the most respected theories in the field.

Although these two theories overlap (Locke, 1977; Miner, 1980), they do have

somewhat different traditions, and so we present them separately here.

Next to job performance,

investigators have studied leadership most often (Mitchell, 1979; De Meuse, 1986). Leadership research may be divided roughly

into two groups: theories about the causes of leaders’ behaviors, and theories

about contingencies influencing the effectiveness of leadership styles.

Research outside these two groups has generated too few studies for us to trace

effect sizes over time (Van Fleet and Yukl, 1986).

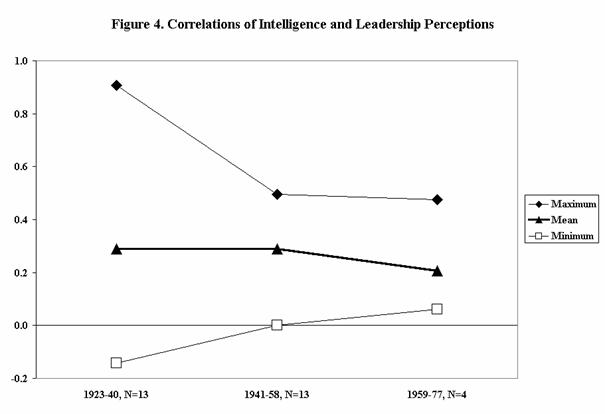

Many years ago,

psychologists seeking ways to identify effective leaders focused their research

on inherent traits. This work, however, turned up very weak relationships, and

no set of traits correlated consistently with leaders’ effectiveness. Traits

also offended Americans’ ideology espousing equality of opportunity (Van Fleet

and Yukl, 1986). Criticisms of trait approaches

directed research towards contingency theories (Lord et al., 1986). But these studies too turned up very weak

relationships, so renewed interest in traits has surfaced (Kenny and Zaccaro, 1983; Schneider, 1985). As an example of the trait

theories, we examine the correlations of intelligence with perceptions of

leadership, because these have demonstrated the highest and most consistent

relationships.

It is impossible to

summarize the effect sizes of contingency theories of leadership in general.

First, even though leadership theorists have proposed many contingency

theories, little research has resulted (Schriesheim

and Kerr, 1977), possibly because some of the contingency theories may be too

unclear to suggest definitive empirical studies (Van Fleet and Yukl, 1986). Second, different theories emphasize different

dependent variables (Campbell, 1977; Schriesheim and

Kerr, 1977; Bass, 1981). Therefore, one must focus on a particular contingency

theory. We examine Fiedler’s (1967) theory because Miner (1984) reported that organizational

scientists respect it highly.

Sources

A manual search of thirteen journals[2]

turned up recent review articles concerning the five variables of interest; Borgen et al.

(1985) identified several of these review articles as exemplary works. We took

data from articles that reported both the effect sizes and the publication

dates of individual studies. Since recent review articles did not cover older

studies well, we supplemented these data by examining older reviews, in books

as well as journals. In all, data came from the twelve sources listed in Table

1; these articles reviewed 261 studies.

Table 1 – Review Article Sources

| Variable | Review articles |
|---|---|
| Job satisfaction | Iaffaldano and Muchinsky (1985); Vroom (1964); Brayfield and Crockett (1955) |
| Absenteeism | Hackett and Guion (1985); Vroom (1964); Brayfield and Crockett (1955) |
| Turnover | McEvoy and Cascio (1985); Steel and Ovalle (1984) |
| Job Performance | Hopkins and Sears (1982); Locke et al. (1980) |
| Leadership | Lord et al. (1986); Peters et al. (1985); Mann (1959); Stogdill (1948) |

Measures

Each research study is represented by a

single measure of effect: for a study that measured the concepts in more than one

way, we averaged the reported effect sizes.

To trace changes in

effect sizes over time, we divided time into three equal periods. For instance,

for studies from 1944 to 1983, we compare the effect sizes for 1944-57, 1958-70

and 1971-83.
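The procedure just described can be sketched as follows. This is a minimal illustration, not the authors' actual computation: the function names are hypothetical, the rule of giving any leftover year to the earliest period is an assumption chosen because it reproduces the 1944-57, 1958-70 and 1971-83 split, and the sample studies are invented placeholders.

```python
from statistics import mean


def equal_periods(first_year, last_year, k=3):
    """Split an inclusive year range into k consecutive, near-equal periods.
    Assumption: leftover years are assigned to the earliest periods."""
    total = last_year - first_year + 1
    base, extra = divmod(total, k)
    periods, start = [], first_year
    for i in range(k):
        length = base + (1 if i < extra else 0)
        periods.append((start, start + length - 1))
        start += length
    return periods


def summarize(studies, periods):
    """studies: list of (publication_year, [effect sizes reported]).
    Each study contributes one value, the average of its reported effects.
    Returns {period: (minimum, maximum, average)} or None for empty periods."""
    summary = {}
    for lo, hi in periods:
        per_study = [mean(effects) for year, effects in studies if lo <= year <= hi]
        if per_study:
            summary[(lo, hi)] = (min(per_study), max(per_study), mean(per_study))
        else:
            summary[(lo, hi)] = None
    return summary


print(equal_periods(1944, 1983))  # [(1944, 1957), (1958, 1970), (1971, 1983)]

# Invented placeholder data, for illustration only.
hypothetical_studies = [(1950, [0.30, 0.10]), (1965, [0.25]), (1975, [0.10, 0.20])]
print(summarize(hypothetical_studies, equal_periods(1944, 1983)))
```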

Results

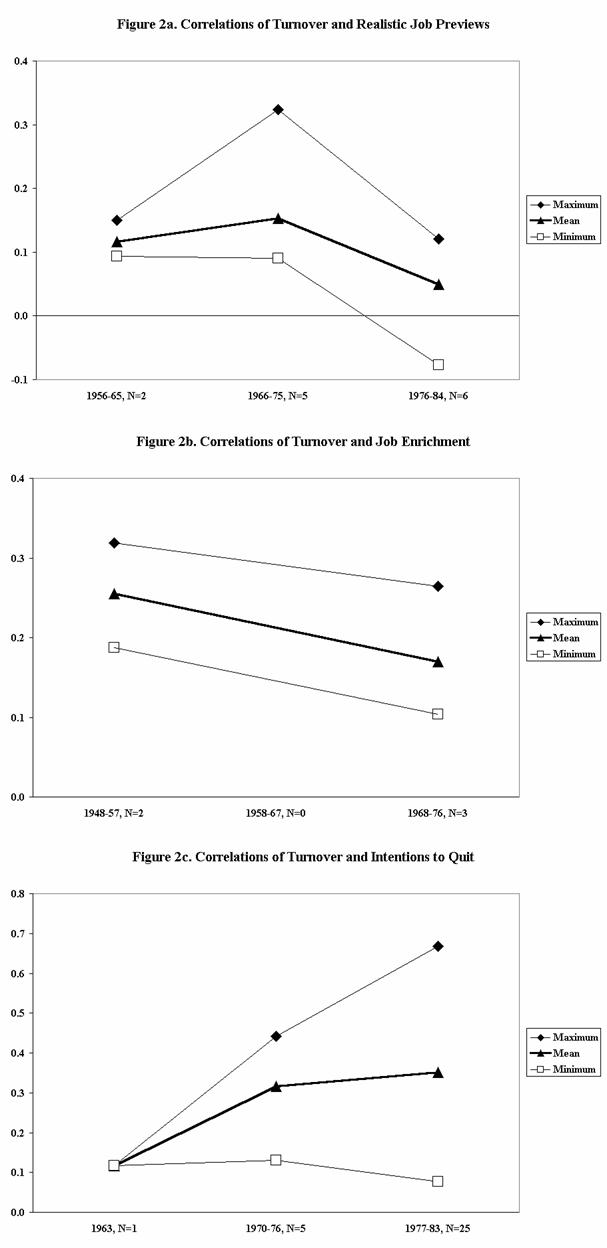

Figures 1-4 present the minimum, maximum

and average effect sizes for the five variables of interest. Three figures

(1(a), 3(b) and 4) seem to show that no progress has occurred over time; and

four figures (1(b), 2(a), 2(b) and 3(a)) seem to indicate that effect sizes

have gradually declined toward zero over time. The largest of these

correlations is only .22 in the most recent time period, so all of these

effects account for less than five per cent of the variance.
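The arithmetic behind the five per cent figure is simply the square of the correlation. The short sketch below makes it explicit; the function name is a hypothetical label, and the second value, .40, is the average correlation reported for Figure 2(c).

```python
def variance_explained(r):
    """Proportion of variance accounted for by a correlation r (that is, r squared)."""
    return r * r


for r in (0.22, 0.40):
    print(f"r = {r:.2f} -> variance explained = {variance_explained(r):.1%}")
# r = 0.22 -> variance explained = 4.8%
# r = 0.40 -> variance explained = 16.0%
```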

Moreover, four of these relationships

(2(a), 2(b), 3(a) and 3(b)) probably incorporate Hawthorne effects: They

measure the effects of interventions. Because all interventions should yield

some effects, the differential impacts of specific interventions would be less

than these effect measures suggest. That is, the effects of behavior

modification, for example, should not be compared with inaction, but compared

with those of an alternative intervention, such as goal setting.

Figure 2(c) is the only

one suggesting significant progress. Almost all of this progress, however,

occurred between the first two time periods: Because only one study was

conducted during the first of these periods, the apparent progress might be no

more than a statement about the characteristics of that single study. This

relationship is also stronger than the others, although not strong enough to

suggest a close causal relationship: The average correlation in the most recent

time period is .40. What this correlation says is that some of the people who

say in private that they intend to quit actually do quit.

Progress with respect to

Fiedler’s contingency theory of leadership is not graphed. Peters et al. (1985) computed the average

correlations (corrected for sampling error) of leadership effectiveness with

the predictions of this theory. The absolute values of the correlations

averaged .38 for the studies from which Fiedler derived this theory

(approximately 1954-65); but for the studies conducted to validate this theory

(approximately 1966-78), the absolute values of the correlations averaged .26.

Thus, these correlations too have declined toward zero over time.

I/O psychologists have often argued that effects do not have to be absolutely large in order to produce meaningful economic consequences (Zedeck and Cascio, 1984; Schneider, 1985). For example, goal setting produced an average performance improvement of 21.6 per cent in the seventeen studies conducted from 1969 to 1979. If performance has a high economic value and goal setting costs very little, then goal setting would be well worth doing on the average. And because the smallest performance improvement was 2 per cent, the risk that goal setting would actually reduce performance seems very low.

This chapter, however,

concerns theoretical development; and so the economic benefits of relations

take secondary positions to identifying controllable moderators, to clarifying

causal links, and to increasing effect sizes. In terms of theoretical

development, it is striking that none of these effect sizes rose noticeably

after the first years. This may have happened for any of five reasons, or more

likely a combination of them:

(a)

Researchers may be clinging to incorrect theories despite

disconfirming evidence (Staw, 1976). This would be

more likely to happen where studies’ findings can be interpreted in diverse

ways. Absolutely small correlations nurture such equivocality, by making it

appear that random noise dominates any systematic relationships and that

undiscovered or uninteresting influences exert much more effect than the known

ones.

(b)

Researchers may be continuing to elaborate traditional methods of

information gathering after these stop generating additional knowledge. For

example, researchers developed very good leadership questionnaires during the

early 1950s. Perhaps these early questionnaires picked up all the information

about leadership that can be gathered via questionnaires. Thus, subsequent

questionnaires may not have represented robust improvements; they may merely

have mistaken sampling variations for generalities.

(c)

Most studies may fail to take advantage of the knowledge produced

by the very best studies. As a sole explanation, this would be unlikely even in

a world that does not reward exact replication, because research journals

receive wide distribution and researchers can easily read reports of others’

projects. However, retrospective interpretations of random variations may

obscure real knowledge in clouds of ad hoc rationalizations, so the consumers

of research may have difficulty distinguishing real knowledge from false.

Because we

wanted to examine as many studies as possible and studies of several kinds of

relationships, we did not attempt to evaluate the methodological qualities of

studies. Thus, we are using time as an implicit measure of improvement in

methodology. But time may be a poor indicator of methodological quality if new

studies do not learn much from the best studies. Reviewing studies of the

relationship between formal planning and profitability, Starbuck (1985)

remarked that the lowest correlations came in the studies that assessed

planning and profitability most carefully and that obtained data from the most

representative samples of firms.

(d)

Those studies obtaining the maximum effect sizes may do so for

idiosyncratic or unknown reasons, and thus produce no generalizable

knowledge. Researchers who provide too little information about studied sites,

subjects, or situations make it difficult for others to build upon their

findings (Orwin and Cordray,

1985); several authors have remarked that many studies report too little

information to support meta-analyses (Steel and Ovalle,

1984; Iaffaldano and Muchinsky,

1985; Scott and Taylor, 1985). The tendencies of people, including scientists,

to use confirmatory strategies mean that they attribute as much of the observed

phenomena as possible to the relationships they expect to see (Snyder, 1981;

Faust, 1984; Klayman and Ha, 1987). Very few studies

report correlations above .5, so almost all studies leave much scope for

misattribution and misinterpretation.

(e)

Humans’ characteristics and behaviors may actually change faster

than psychologists’ theories or measures improve. Stagner

(1982) argued that the context of I/O psychology has changed considerably over

the years: the economy has shifted from production to service industries, jobs

have evolved from heavy labor to cognitive functions, employees’ education

levels have risen, and legal requirements have multiplied and changed,

especially with respect to discrimination. For instance, Haire

et al. (1966) found that managers’

years of education correlate with their ideas about proper leadership, and

education alters subordinates’ concepts of proper leadership (Dreeben, 1968; Kunda, 1987). In

the US, median educational levels have risen considerably, from 9.3 years in

1950 to 12.6 years in 1985 (Bureau of the Census, 1987). Haire

et al. also attributed 25 per cent of

the variance in managers’ leadership beliefs to national differences: so, as

people move around, either between countries or within a large country, they

break down the differences between regions and create new beliefs that

intermingle beliefs that used to be distinct. Cummings and Schmidt (1972)

conjectured plausibly that beliefs about proper leadership vary with

industrialization; thus, the ongoing industrialization of the American

south-east and south-west and the concomitant deindustrialization of the

north-east are altering Americans’ responses to leadership questionnaires.

Whatever the reasons,

the theories of I/O psychology explain very small fractions of observed

phenomena, I/O psychology is making little positive progress, and it may

actually be making some negative progress. Are these the kinds of results that

science is supposed to produce?

PARADIGM CONSENSUS

Kuhn (1970) characterized scientific

progress as a sequence of cycles, in which occasional brief spurts of

innovation disrupt long periods of gradual incremental development. During the

periods of incremental development, researchers employ generally accepted

methods to explore the implications of widely accepted theories. The

researchers supposedly see themselves as contributing small but lasting

increments to accumulated stores of well-founded knowledge; they choose their

fields because they accept the existing methods, substantive beliefs and

values, and consequently they find satisfaction in incremental development

within the existing frames of reference. Kuhn used the term paradigm to denote

one of the models that guide such incremental developments. Paradigms, he

(1970, p. 10) said, provide ‘models from which spring particular coherent traditions

of scientific research’.

Thus, Kuhn defined

paradigms, not by their common properties, but by their common effects. His

book actually talks about 22 different kinds of paradigm (Masterman,

1970), which Kuhn placed into two broad categories: (a) a constellation of

beliefs, values and techniques shared by a specific scientific community; and

(b) an example of effective problem-solving that becomes an object of imitation

by a specific scientific community.

I/O psychologists have

traditionally focused on a particular set of variables: the nucleus of this set

would be those examined in the previous section: job satisfaction, absenteeism,

turnover, job performance and leadership. Also, we believe that substantial

majorities of I/O psychologists would agree with some base-line propositions

about human behavior. However, Campbell et

al. (1982) found a lack of consensus among American I/O psychologists

concerning substantive research goals. They asked them to suggest ‘the major

research needs that should occupy us during the next 10-15 years’ (p. 155): 105

respondents contributed 146 suggestions, of which 106 were unique. Campbell et al. (1982, p. 71) inferred: ‘The

field does not have very well worked out ideas about what it wants to do. There

was relatively little consensus about the relative importance of substantive

issues.’

Shared Beliefs, Values and Techniques

I/O psychologists do seem to have a

paradigm of type (a), namely shared beliefs, values, and techniques, but it would seem

to be a methodological paradigm rather than a substantive one. For instance,

Watkins et al.’s (1986) analysis of

the 1984-85 citations in three I/O journals revealed that a methodologist,

Frank L. Schmidt, has been by far the most cited author. In this methodological

orientation, I/O psychology fits a general pattern: numerous authors have

remarked on psychology’s methodological emphasis (Deese,

1972; Koch, 1981; Sanford, 1982). For instance, Brackbill

and Korten (1970, p. 939) observed that psychological

‘reviewers tend to accept studies that are methodologically sound but

uninteresting, while rejecting research problems that are of significance for

science or society but for which faultless methodology can only be

approximated.’ Bakan (1974) called psychology

‘methodolatrous’. Contrasting psychology’s development with that of physics, Kendler (1984, p. 9) argued that ‘Psychological revolutions

have been primarily methodological in nature.’ Shames (1987, p. 264)

characterized psychology as ‘the most fastidiously committed, among the scientific

disciplines, to a socially dominated disciplinary matrix which is almost

exclusively centred on method.’

I/O psychologists not

only emphasize methodology, they exhibit strong consensus about methodology.

Specifically, I/O psychologists speak and act as if they believe they should

use questionnaires, emphasize statistical hypothesis tests, and raise the

validity and reliability of measures. Among others, Campbell (1982, p. 699)

expressed the opinion that I/O psychologists have been relying too much on ‘the

self-report questionnaire, statistical hypothesis testing, and multivariate

analytic methods at the expense of problem generation and sound measurement’.

As Campbell implied, talk about reliability and especially validity tends to be

lip-service: almost always, measurements of reliability are self-reflexive

facades and no direct means even exist to assess validity. I/O psychologists

are so enamored of statistical hypothesis tests that they often make them when

they are inappropriate, for instance when the data are not samples but entire

sub-populations, such as all the employees of one firm, or all of the members

of two departments. Webb et al.

(1966) deplored an overdependence on interviews and questionnaires, but I/O

psychologists use interviews much less often than questionnaires (Stone, 1978).

An emphasis on

methodology also characterizes the social sciences at large. Garvey et al. (1970) discovered that editorial

processes in the social sciences place greater emphasis on statistical procedures

and on methodology in general than do those in the physical sciences; and

Lindsey and Lindsey (1978) factor analysed social

science editors’ criteria for evaluating manuscripts and found that a

quantitative-methodological orientation arose as the first factor. Yet, other

social sciences may place somewhat less emphasis on methodology than does I/O

psychology. For instance, Kerr et al.

(1977) found little evidence that methodological criteria strongly influence

the editorial decisions by management and social science journals. According to

Kerr et al., the most influential

methodological criterion is statistical insignificance, and the editors of

three psychological journals express much stronger negative reactions to

insignificant findings than do editors of other journals.

Mitchell et al. (1985) surveyed 139 members of

the editorial boards of five journals that publish work related to

organizational behavior, and received responses from 99 editors. Table 2

summarizes some of these editors’ responses.[3]

The average editor said that ‘importance’ received more weight than other

criteria; that methodology and logic were given nearly equal weights, and that

presentation carried much less weight. When asked to assign weights among three

aspects of ‘importance’, most editors said that scientific contribution

received much more weight than practical utility or readers’ probable interest

in the topic. Also, they assigned nearly equal weights among three aspects of

methodology, but gave somewhat more weight to design.

Table 2 compares the

editors of two specialized I/O journals - Journal of Applied Psychology (JAP)

and Organizational Behavior and Human Decision Processes (OBHDP) - with

the editors of three more general management journals - Academy of Management

Journal (AMJ), Academy of Management Review (AMR) and Administrative

Science Quarterly (ASQ). Contrary to our expectations, the average editor

of the two I/O journals said that he or she allotted more weight to

‘importance’ and less weight to methodology than did the average editor of the

three management journals. It did not surprise us that the average editor of

the I/O journals gave less weight to the presentation than did the average

editor of the management journals. Among aspects of methodology, the average

I/O editor placed slightly more weight on design and less on measurement than

did the average management editor. When assessing ‘importance’, the average I/O

editor said that he or she gave distinctly less weight to readers’ probable

interest in a topic and more weight to practical utility than did the average

management editor. Thus, the editors of I/O journals may be using practical

utility to make up for I/O psychologists’ lack of consensus concerning

substantive research goals: if readers disagree about what is interesting, it

makes no sense to take account of their preferences (Campbell et al., 1982).

Table 2 – Review Article Sources

| Relative weights among four general criteria | All five journals | JAP and OBHDP | AMJ, AMR, and ASQ |
| --- | --- | --- | --- |
| ‘Importance’ | 35 | 38 | 34 |
| Methodology | 26 | 25 | 27 |
| Logic | 24 | 24 | 24 |
| Presentation | 15 | 13 | 16 |

| Relative weights among three aspects of importance | All five journals | JAP and OBHDP | AMJ, AMR, and ASQ |
| --- | --- | --- | --- |
| Scientific contribution | 53 | 54 | 53 |
| Practical utility | 28 | 31 | 26 |
| Readers’ interest in topic | 19 | 14 | 21 |

| Relative weights among three aspects of methodology | All five journals | JAP and OBHDP | AMJ, AMR, and ASQ |
| --- | --- | --- | --- |
| Design | 38 | 39 | 37 |
| Measurement | 31 | 30 | 32 |
| Analysis | 31 | 31 | 31 |

Editors’ stated priority

of ‘importance’ over methodology contrasts with the widespread perception that psychology

journals emphasize methodology at the expense of substantive importance. Does

this contrast imply that the actual behaviors of journal editors diverge from

their espoused values? Not necessarily. If nearly all of the manuscripts

submitted to journals use accepted methods, editors would have little need to

emphasize methodology. And if, like I/O psychologists in general, editors

disagree about the substantive goals of I/O research, editors’ efforts to

emphasize ‘importance’ would work at cross-purposes and have little net effect.

Furthermore, editors would have restricted opportunities to express their

opinions about what constitutes scientific contribution or practical utility if

most of the submitted manuscripts pursue traditional topics and few manuscripts

actually address ‘research problems that are of significance for science or

society’.

Objects of Imitation

I/O psychology may also have a few

methodological and substantive paradigms of type (b): examples that become

objects of imitation. For instance, Griffin (1987, pp. 82-3) observed:

The [Hackman

and Oldham] job characteristics theory was one of the most widely studied and

debated models in the entire field during the late 1970s. Perhaps the reasons

behind its widespread popularity are that it provided an academically sound

model, a packaged and easily used diagnostic instrument, a set of

practitioner-oriented implementation guidelines, and an initial body of

empirical support, all within a relatively narrow span of time. Interpretations

of the empirical research pertaining to the theory have ranged from inferring

positive to mixed to little support for its validity. (References omitted.)

Watkins et al. (1986) too found evidence of

interest in Hackman and Oldham’s (1980)

job-characteristics theory: five of the twelve articles that were most

frequently cited by I/O psychologists during 1984-85 were writings about this

theory, including Roberts and Glick’s (1981) critique of its validity. Although

its validity evokes controversy, Hackman and Oldham’s

theory seems to be the most prominent current model for imitation. As well, the

citation frequencies obtained by Watkins et

al. (1986), together with nominations of important theories collected by

Miner (1984), suggest that two additional theories attract considerable

admiration: Katz and Kahn’s (1978) open-systems theory and Locke’s (1968)

goal-setting theory. It is hard to see what is common among these three

theories that would explain their roles as paradigms; open-systems theory, in

particular, is much less operational than job-characteristics theory, and it is

more a point of view than a set of propositions that could be confirmed or

disconfirmed.

To evaluate more

concretely the paradigm consensus among I/O psychologists, we obtained several indicators

that others have claimed relate to paradigm consensus.

Measures

As indicators of paradigm consensus,

investigators have used: the ages of references, the percentages of references

to the same journal, the numbers of references per article, and the rejection

rates of journals.

Kuhn proposed that

paradigm consensus can be evaluated through literature references. He

hypothesized that during normal-science periods, references focus upon older,

seminal works; and so the numbers and types of references indicate

connectedness to previous research (Moravcsik and Murugesan, 1975). First, in a field with high paradigm

consensus, writers should cite the key works forming the basis for that field

(Small, 1980). Alternatively, a field with a high proportion of recent

references exhibits a high degree of updating, and so has little paradigm

consensus. One measure of this concept is the Citing Half-Life, which shows the

median age of the references in a journal. Second, referencing to the same

journal should reflect an interaction with research in the same domain, so

higher referencing to the same journal should imply higher paradigm consensus.

Third, since references reflect awareness of previous research, a field with

high paradigm consensus should have a high average number of references per

article (Summers, 1979).
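
The three reference-based indicators just described can be sketched in a few lines. This is only an illustration under stated assumptions: it takes the description of the Citing Half-Life above (the median age of references) at face value, whereas the published SSCI figures may be computed somewhat differently, and the function names and the sample data in the usage note are hypothetical rather than drawn from any journal.

```python
from statistics import mean, median


def citing_half_life(reference_years, publication_year):
    """Median age, in years, of the works cited (assumed definition)."""
    ages = [publication_year - year for year in reference_years]
    return median(ages)


def same_journal_share(reference_journals, citing_journal):
    """Fraction of references that point back to the citing journal."""
    hits = sum(1 for journal in reference_journals if journal == citing_journal)
    return hits / len(reference_journals)


def references_per_article(reference_counts):
    """Average number of references across a set of articles."""
    return mean(reference_counts)
```

For a hypothetical 1984 article citing works from 1983, 1981, 1980, 1978, 1975, 1970 and 1962, `citing_half_life` returns 6. On the reasoning above, a longer half-life, a larger same-journal share, and more references per article would each be read as signs of stronger paradigm consensus.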

Journals constitute the

accepted communication networks for transmitting knowledge in psychology

(Price, 1970; Pinski and Narin,

1979), and high paradigm consensus means agreement about what research deserves

publication. Zuckerman and Merton (1971) said that the humanities demonstrate

their pre-paradigm states through very high rejection rates by journals,

whereas the social sciences exhibit their low paradigm consensus through high

rejection rates, and the physical sciences show their high paradigm consensus

through low rejection rates. That is, paradigm consensus supposedly enables

physical scientists to predict reliably whether their manuscripts are likely to

be accepted for publication, and so they simply do not submit manuscripts that

have little chance of publication.

Results

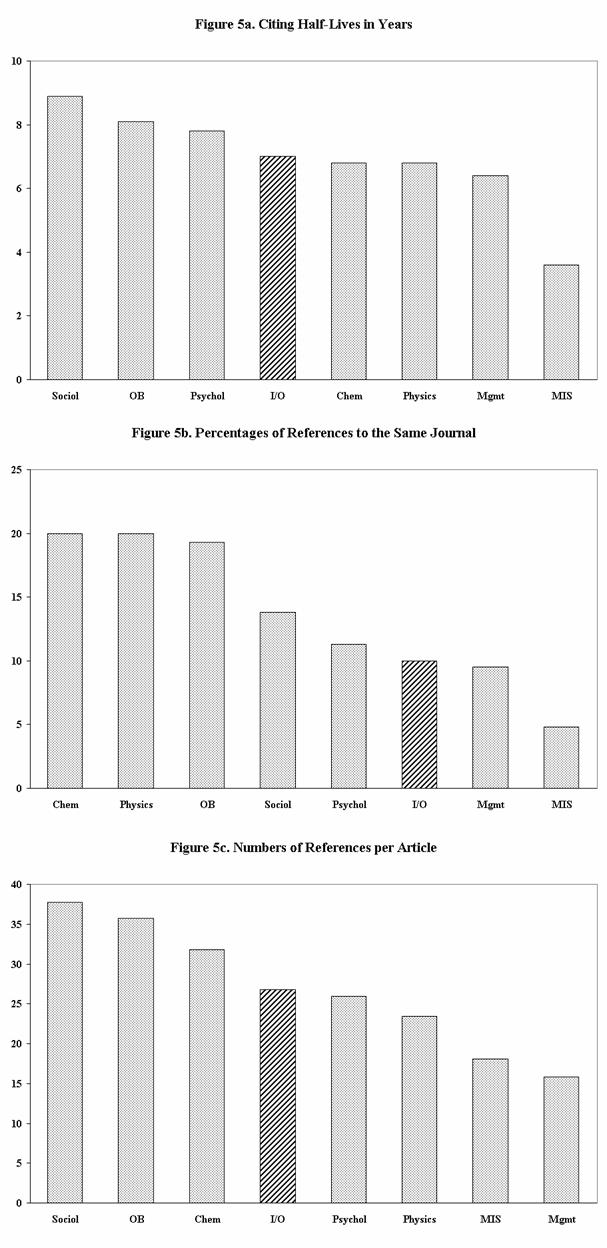

Based partly on work by Sharplin and Mabry (1985), Salancik

(1986) identified 24 ‘organizational social science journals’. He divided these

into five groups that cite one another frequently; the group that Salancik labeled Applied corresponds closely to I/O

psychology.[4]

Figure 5 compares these groups with respect to citing half-lives, references to

the same journal, and numbers of references per article. The SSCI Journal Citation

Reports (Social Science Citation Index, Garfield, 1981-84b) provided these

three measures, although a few data were missing. We use four-year averages in

order to smooth the effects of changing editorial policies and of the

publication of seminal works (Blackburn and Mitchell, 1981). Figure 5 also

includes comparable data for three fields that do not qualify as

‘organizational social science’: chemistry, physics, and management information

systems (MIS).[5]

Data concerning chemistry, physics and MIS hold special interest because they

are generally believed to be making rapid progress; MIS may indeed be in a

pre-paradigm state.

Seven of the eight

groups of journals have average citing half-lives longer than five years, the

figure that Line and Sandison (1974) proposed as

signaling a high degree of updating. Only MIS journals have a citing half-life

below five years; this field is both quite new and changing with extreme

rapidity. I/O psychologists update references at the same pace as chemists and

physicists, and only slightly faster than other psychologists and OB

researchers.

Garfield (1972) found

that referencing to the same journal averages around 20 per cent across diverse

fields, and chemists and physicists match this average. All five groups of

‘organizational social science’ journals average below 20 per cent references

to the same journal, so these social scientists do not focus publications in

specific journals to the same degree as physical scientists, although the OB

researchers come close to the physical-science pattern. The I/O psychologists,

however, average less than 10 per cent references to the same journal, so they

focus publications even less than most social scientists. MIS again has a much

lower percentage than the other fields.

Years ago, Price (1965)

and Line and Sandison (1974) said 15-20 references

per article indicated strong interaction with previous research. Because the

numbers of references have been increasing in all fields (Summers, 1979),

strong interaction probably implies 25-35 references per article today. I/O

psychologists use numbers of references that fall within this range, and that

look much like the numbers for chemists, physicists and other psychologists.

We could not find

rejection rates for management, organizations and sociology journals, but

Jackson (1986) and the American Psychological Association (1986) published

rejection rates for psychology journals during 1985. In that year, I/O

psychology journals rejected 82.5 per cent of the manuscripts, which is near

the 84.3 per cent average for other psychology journals. By contrast, Zuckerman

and Merton (1971) reported that the rejection rates for chemistry and physics

journals were 31 and 24 per cent respectively. Similarly, Garvey et al. (1970) observed higher rejection

rates and higher rates of multiple rejections in the social sciences than in

the physical sciences. However, these differences in rejection rates may

reflect the funding and organization of research more than its quality or

substance: American physical scientists receive much more financial support

than do social scientists, most grants for physical science research go to

rather large teams, and physical scientists normally replicate each other’s

findings. Thus, most physical science research is evaluated in the process of

awarding grants as well as in the editorial process, teams evaluate and revise

their research reports internally before submitting them to journals, and

researchers have incentives to replicate their own findings before they publish

them. The conciseness of physical science articles reduces the costs of

publishing them. Also, since the mid-1950s, physical science journals have

asked authors to pay voluntary page charges, and authors have

characteristically drawn upon research grants to pay these charges.

Peters and Ceci (1982) demonstrated for psychology in general that a

lack of substantive consensus shows up in review criteria. They chose twelve

articles that had been published in psychology journals, changed the authors’

names, and resubmitted the articles to the same journals that had published

them: The resubmissions were evaluated by 38 reviewers. Eight per cent of the

reviewers detected that they had received resubmissions, which terminated

review of three of the articles. The remaining nine articles completed the

review process, and eight of these were rejected. The reviewers stated mainly

methodological reasons rather than substantive ones for rejecting articles, but

Mahoney’s (1977) study suggests that reviewers use methodological reasons to

justify rejection of manuscripts that violate the reviewers’ substantive

beliefs.

Figure 6 graphs changes

in four indicators from 1957 to 1984 for the Journal of Applied Psychology and,

where possible, for other I/O psychology journals.[6]

Two of the indicators in Figure 6 have remained quite constant; one indicator

has risen noticeably; and one has dropped noticeably. According to the writers

on paradigm consensus, all four of these indicators should rise

as consensus increases. If these

indicators actually do measure paradigm consensus, I/O psychology has not been

developing distinctly more paradigm consensus over the last three decades.

Overall, the foregoing

indicators imply that I/O psychology looks much like management, sociology, and

other areas of psychology. In two dimensions - citing half-lives and references

per article - I/O psychology also resembles chemistry and physics, fields that

are usually upheld as examples of paradigm consensus (Lodahl

and Gordon, 1972). I/O psychology differs from chemistry and physics in

references to the same journal and in rejection rates, but the latter

difference is partly, perhaps mainly, a result of government policy. Hedges

(1987) found no substantial differences between physics and psychology in the

consistency of results across studies, and Knorr-Cetina’s

(1981) study suggests that research in chemistry incorporates the same kinds of

uncertainties, arbitrary decisions and interpretations, social influences, and

unproductive tangents that mark research in psychology.

Certainly, these

indicators do not reveal dramatic differences between I/O psychology and

chemistry or physics. However, these indicators make no distinctions between

substantive and methodological paradigms. The writings on paradigms cite

examples from the physical sciences that are substantive at least as often as

they are methodological; that is, the examples focus upon Newton’s laws or

phlogiston or evolution, as well as on titration or dropping objects from the

Tower of Pisa. Though far from a representative sample, this suggests that

physical scientists place more emphasis on substantive paradigms than I/O

psychologists do; but since I/O psychology seems to be roughly as paradigmatic

as chemistry and physics, this in turn suggests that I/O psychologists place

more emphasis on methodological paradigms than physical scientists do.

Perhaps I/O

psychologists tend to de-emphasize substantive paradigms and to emphasize

methodological ones because they put strong emphasis on trying to discover

relationships by induction. But can analyses of empirical evidence produce

substantive paradigms where no such paradigms already exist?

INDUCING RELATIONSHIPS FROM OBSERVATIONS

Our colleague Art Brief has been heard to

proclaim, ‘Everything correlates .1 with everything else.’ Suppose, for the

sake of argument, that this were so. Then all observed correlations would

deviate from the null hypothesis of a correlation less than or equal to zero, and a sample

of 272 or more would produce statistical significance at the .05 level with a

one-tailed test. If researchers would make sure that their sample sizes exceed

272, all observed correlations would be significantly greater than zero.

Psychologists would be inundated with small, but statistically significant, correlations.

In fact, psychologists

could inundate themselves with small, statistically significant correlations

even if Art Brief is wrong. By making enough observations, researchers can be

certain of rejecting any point null hypothesis that defines an infinitesimal

point on a continuum, such as the hypothesis that two sample means are exactly

equal, as well as the hypothesis that a correlation is exactly zero. If a point

hypothesis is not immediately rejected, the researcher need only gather more

data. If an observed correlation is .04, a researcher would have to make 2402

observations to achieve significance at the .05 level with a two-tailed test;

and if the observed correlation is .2, the researcher will need just 97

observations.
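
The sample sizes quoted in the last two paragraphs follow from the usual t test for a correlation coefficient, t = r * sqrt((n - 2) / (1 - r^2)) with n - 2 degrees of freedom. The sketch below searches for the smallest n at which a given observed r reaches significance. It is a minimal illustration, not a statistical library: the function names are hypothetical, the tail probability of the t distribution is obtained by Simpson's rule rather than from a standard routine, and the one-tailed case assumes a positive r.

```python
import math


def t_upper_tail(t, df, steps=200):
    """P(T > t) for t >= 0 under Student's t, by Simpson's rule on [0, t]."""
    log_c = (math.lgamma((df + 1) / 2) - math.lgamma(df / 2)
             - 0.5 * math.log(df * math.pi))

    def pdf(x):
        return math.exp(log_c - (df + 1) / 2 * math.log1p(x * x / df))

    h = t / steps  # steps must be even for Simpson's rule
    total = pdf(0.0) + pdf(t)
    for i in range(1, steps):
        total += (4 if i % 2 else 2) * pdf(i * h)
    return 0.5 - total * h / 3


def p_value(r, n, tails=2):
    """p value for an observed correlation r in a sample of size n."""
    df = n - 2
    t = abs(r) * math.sqrt(df / (1 - r * r))
    return tails * t_upper_tail(t, df)


def min_sample_size(r, alpha=0.05, tails=2):
    """Smallest n at which an observed correlation r is significant."""
    n = 3
    while p_value(r, n, tails) >= alpha:
        n += 1
    return n
```

Under these assumptions, `min_sample_size(0.1, tails=1)` gives 272, while `min_sample_size(0.04)` and `min_sample_size(0.2)` give 2402 and 97, matching the figures in the text. The search always terminates for any nonzero r, which is the point of the argument: a large enough sample makes any nonzero correlation significant.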

Induction requires distinguishing

meaningful relationships (signals) against an obscuring background of

confounding relationships (noise). The background of weak and meaningless or

substantively secondary correlations may not have an average value of zero and

may have a variance greater than that assumed by statistical tests. Indeed, we

hypothesize that the distributions of correlation coefficients that researchers

actually encounter diverge quite a bit from the distributions assumed by

statistical tests, and that the background relationships have roughly the same

order of magnitude as the meaningful ones, partly because researchers’

nonrandom behaviors construct meaningless background relationships. These

meaningless relationships make induction untrustworthy.

In many tasks, people

can distinguish weak signals against rather strong background noise. The reason

is that both the signals and the background noise match familiar patterns.

People have trouble making such distinctions where signals and noise look much

alike or where signals and noise have unfamiliar characteristics. Psychological

research has the latter characteristics. The activity is called research

because its outputs are unknown; and the signals and noise look a lot alike in

that both have systematic components and both contain components that vary

erratically. Therefore, researchers rely upon statistical techniques to make

these distinctions. But these techniques assume: (a) that the so-called random

errors really do cancel each other out so that their average values are close

to zero; and (b) that the so-called random errors in different variables are

uncorrelated. These are very strong assumptions because they presume that the

researchers’ hypotheses encompass absolutely all of the systematic effects in

the data, including effects that the researchers have not foreseen or measured.

When these assumptions are not met, the statistical techniques tend to mistake

noise for signal, and to attribute more importance to the researchers’

hypotheses than they deserve. It requires very little in the way of systematic

‘errors’ to distort or confound correlations as small as those I/O

psychologists usually study.

One reason to expect

confounding background relationships is that a few broad characteristics of people

and social systems pervade psychological data. One such characteristic is

intelligence: Intelligence correlates with many other characteristics and

behaviors, such as leadership, job satisfaction, job performance, social class,

income, education and geographic location during childhood. These correlates of

intelligence tend to correlate with each other, independently of any direct

causal relations among them, because of their common relation to intelligence.

Other broad characteristics that correlate with many variables include sex,

age, social class, education, group or organizational size, and geographic

location.

A group of related

organization-theory studies illustrates how broad characteristics may mislead

researchers. In 1965, Woodward hypothesized that organizations employing

different technologies adopt different structures, and she presented some data

supporting this view. There followed many studies that found correlations

between various measures of organization-level technology and measures of

organizational structure. Researchers devoted considerable effort to refining

the measures of technology and structure and to exploring variations on this

general theme. After some fifteen years of research, Gerwin

(1981) pulled together all the diverse findings: Although a variety of

significant correlations had been observed, virtually all of them differed

insignificantly from zero when viewed as partial correlations with

organizational size controlled.
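
A small simulation can illustrate how a broad characteristic produces this pattern. In the sketch below, two variables labeled technology and structure each depend on size plus independent noise and have no direct relation to each other. The data are simulated and the variable names are hypothetical; nothing here reproduces Gerwin's data or the studies he reviewed. The first-order partial correlation used is the standard formula r_xy.z = (r_xy - r_xz r_yz) / sqrt((1 - r_xz^2)(1 - r_yz^2)).

```python
import math
import random


def pearson(xs, ys):
    """Pearson product-moment correlation of two equal-length lists."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    return sxy / math.sqrt(sxx * syy)


def partial_corr(r_xy, r_xz, r_yz):
    """Correlation of x and y with z held constant (first-order partial)."""
    return (r_xy - r_xz * r_yz) / math.sqrt((1 - r_xz ** 2) * (1 - r_yz ** 2))


def simulate(n=5000, seed=0):
    """Return (raw, partial) correlations for two variables driven by size."""
    rng = random.Random(seed)
    size = [rng.gauss(0, 1) for _ in range(n)]
    technology = [s + rng.gauss(0, 1) for s in size]  # depends only on size
    structure = [s + rng.gauss(0, 1) for s in size]   # depends only on size
    r_ts = pearson(technology, structure)
    r_tz = pearson(technology, size)
    r_sz = pearson(structure, size)
    return r_ts, partial_corr(r_ts, r_tz, r_sz)
```

With these settings the raw correlation between the two variables should come out near .5, while the partial correlation with size controlled should be close to zero, even though the raw correlation would pass any conventional significance test.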

Researchers’ control is

a second reason to expect confounding background relationships. Researchers

often aggregate numerous items into composite variables; and the researchers

themselves decide (possibly indirectly via a technique such as factor analysis)

which items to include in a specific variable and what weights to give to

different items. By including in two composite variables the same items or

items that differ quite superficially from each other, researchers generate

controllable but substantively meaningless correlations between the composites.

Obviously, if two composite variables incorporate many very similar items, the

two composites will be highly correlated. In a very real sense, the

correlations between composite variables lie entirely within the researchers’

control; researchers can construct these composites such that they correlate

strongly or weakly, and so the ‘observed’ correlations convey more information

about the researchers’ beliefs than about the situations that the researchers

claim to have observed.

The renowned Aston

studies show how researchers’ decisions may determine their findings (Starbuck,

1981). The Aston researchers made 1000-2000 measurements of each organization,

and then aggregated these into about 50 composite variables. One of the

studies’ main findings was that four of these composite variables - functional

specialization, role specialization, standardization and

formalization - correlate strongly: The first Aston study found correlations

ranging from .57 to .87 among these variables. However, these variables look a lot

alike when one looks into their compositions: Functional specialization and

role specialization were defined so that they had to correlate positively, and

so that a high correlation between them indicated that the researchers observed

organizations having different numbers of specialities. Standardization

measured the presence of these same specialities, but did so by noting the

existence of documents; and formalization too was measured by the presence of

documents, frequently the same documents that determined standardization. Thus,

the strong positive correlations were direct consequences of the researchers’

decisions about how to construct the variables.
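
The effect of overlapping items can be shown with purely random data. In the following sketch, every item is independent noise, so no substantive relationship exists. Two composites are formed by summing items, and the only thing varied is how many items the two composites share. The function names and parameter values are hypothetical, and the simulation is a stylized illustration, not a reconstruction of the Aston measures.

```python
import math
import random


def pearson(xs, ys):
    """Pearson product-moment correlation of two equal-length lists."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    return sxy / math.sqrt(sxx * syy)


def composite_overlap_correlation(n_cases=5000, items_per_composite=10,
                                  shared=5, seed=0):
    """Correlation between two summed composites that share `shared` items."""
    rng = random.Random(seed)
    total_items = 2 * items_per_composite - shared
    a_scores, b_scores = [], []
    for _ in range(n_cases):
        # Every item is independent noise: no real relationships exist.
        items = [rng.gauss(0, 1) for _ in range(total_items)]
        a_scores.append(sum(items[:items_per_composite]))   # first k items
        b_scores.append(sum(items[-items_per_composite:]))  # last k items
    return pearson(a_scores, b_scores)
```

For independent items of equal variance, the expected correlation between the composites is simply the shared fraction, `shared / items_per_composite`. Sharing five of ten items should therefore yield a correlation near .5, and sharing none a correlation near zero, so the size of the 'observed' correlation is set by the construction of the composites.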

Focused sampling is a

third reason to anticipate confounding background relationships. So-called samples

are frequently not random, and many of them are complete sub-populations. If,

for example, a study obtains data from every employee in a single firm, the

number of employees should not be a sample size for the purposes of statistical

tests: For comparisons among these employees, complete sub-populations have

been observed, the allocations of specific employees to these sub-populations

are not random but systematic, and statistical tests are inappropriate. For

extrapolation of findings about these employees to those in other firms, the

sample size is one firm. This firm, however, is unlikely to have been selected

by a random process from a clearly defined sampling frame and it may possess

various distinctive characteristics that make it a poor basis for generalization

- such as its willingness to allow psychologists entry, or its geographic

location, or its unique history.

These are not

unimportant quibbles about the niceties of sampling. Study after study has

turned up evidence that people who live close together, who work together, or

who socialize together tend to have more attitudes, beliefs, and behaviors in

common than do people who are far apart physically and socially. That is,

socialization and interaction create distinctive sub-populations. Findings

about any one of these sub-populations probably do not extrapolate to others

that lie far away or that have quite dissimilar histories or that live during

different ages. It would be surprising if the blue-collar workers in a steel

mill in Pittsburgh were to answer a questionnaire in the same way as the

first-level supervisors in a steel mill in Essen, and even more surprising if

the same answers were to come from executives in an insurance company in

Calcutta. The blue-collar workers in one steel mill in Pittsburgh might not

even answer the questionnaire in the same way as the blue-collar workers in

another steel mill in Pittsburgh if the two mills had distinctly different

histories and work cultures.

Subjective data obtained

from individual respondents at one time and through one method provide a fourth

reason to watch for confounding background relationships. By including items in

a single questionnaire or a single interview, researchers suggest to

respondents that they ought to see relationships among these items; and by

presenting the items in a logical sequence, the researchers suggest how the

items ought to relate. Only an insensitive respondent would ignore such strong

hints. Moreover, respondents have almost certainly made sense of their worlds,

even if they do not understand these worlds in some objective sense. For

instance, Lawrence and Lorsch (1967) asked managers

to describe the structures and environments of their organizations; they then

drew inferences about the relationships of organizations’ structures to their

environments. These inferences might be correct statements about relationships

that one could assess with objective measures; or they might be correct

statements about relationships that managers perceive, but managers’ perceptions

might diverge considerably from objective measures (Starbuck, 1985). Would

anyone be surprised if it turned out that managers perceive what makes sense

because it meshes into their beliefs? In fact, two studies (Tosi

et al., 1973; Downey et al., 1975) have attempted to compare

managers’ perceptions of their environments with other measures of those

environments: both studies found no consistent correlations between the

perceived and objective measures. Furthermore, Downey et al. (1977) found that managers’ perceptions of their firms’

environments correlate more strongly with the managers’ personal

characteristics than with the measurable characteristics of the environments.

As to perceptions of organization structure, Payne and Pugh (1976) compared

people’s perceptions with objective measures: they surmised (a) that the

subjective and objective measures correlate weakly; and (b) that people often

have such different perceptions of their organization that it makes no sense to

talk about shared perceptions.

Foresight is a fifth and

possibly the most important reason to anticipate confounding background

relationships. Researchers are intelligent, observant people who have

considerable life experience and who are achieving success in life. They are

likely to have sound intuitive understanding of people and of social systems;

they are many times more likely to formulate hypotheses that are consistent

with their intuitive understanding than ones that violate it; they are quite

likely to investigate correlations and differences that deviate from zero; and

they are less likely than chance would imply to observe correlations and

differences near zero. This does not mean that researchers can correctly

attribute causation or understand complex interdependencies, for these seem to

be difficult, and researchers make the same kinds of judgement,

interpretation, and attribution errors that other people make (Faust, 1984).

But prediction does not require real understanding. Foresight does suggest that

psychological differences and correlations have statistical distributions very

different from the distributions assumed in hypothesis tests. Hypothesis tests

assume no foresight.

If the differences and

correlations that psychologists test have distributions quite different from

those assumed in hypothesis tests, psychologists are using tests that assign

statistical significance to confounding background relationships. If

psychologists then equate statistical significance with meaningful

relationships, which they often do, they are mistaking confounding background

relationships for theoretically important information. One result is that

psychological research may be creating a cloud of statistically significant

differences and correlations that not only have no real meaning but that impede

scientific progress by obscuring the truly meaningful ones.
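
The argument above can be illustrated with a small simulation. This is only a sketch under stated assumptions, not the analysis reported in this chapter: it uses the Fisher z approximation for the sampling distribution of a correlation, and it supposes a hypothetical population of true correlations that is normally distributed around .09 with a standard deviation of .2. The function name and all parameter values are illustrative.

```python
import math
import random
from statistics import NormalDist

def significant_share(n, rho_mean, rho_sd, trials=20000, alpha=0.05, seed=0):
    """Share of simulated correlations judged significant by a two-tailed test of rho = 0.

    Uses the Fisher z approximation: atanh(r) is roughly Normal(atanh(rho), 1/sqrt(n - 3)).
    """
    rng = random.Random(seed)
    se = 1.0 / math.sqrt(n - 3)
    z_crit = NormalDist().inv_cdf(1 - alpha / 2) * se  # cutoff on the Fisher z scale
    hits = 0
    for _ in range(trials):
        # Hypothetical population of true correlations (an assumption, not the chapter's data).
        rho = min(0.95, max(-0.95, rng.gauss(rho_mean, rho_sd)))
        z_obs = rng.gauss(math.atanh(rho), se)
        if abs(z_obs) > z_crit:
            hits += 1
    return hits / trials

# The test's own assumption: every true correlation is exactly zero.
no_foresight = significant_share(n=120, rho_mean=0.0, rho_sd=0.0)
# Assumed alternative: true correlations spread around a small positive mean.
with_foresight = significant_share(n=120, rho_mean=0.09, rho_sd=0.2)

print(f"true correlations all zero: {no_foresight:.3f}")
print(f"true correlations spread around .09: {with_foresight:.3f}")
```

Under the test's own assumption, about 5 per cent of correlations should pass the .05 threshold. Under the assumed alternative, a far larger share passes, even though none of the simulated relationships was selected for theoretical importance.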

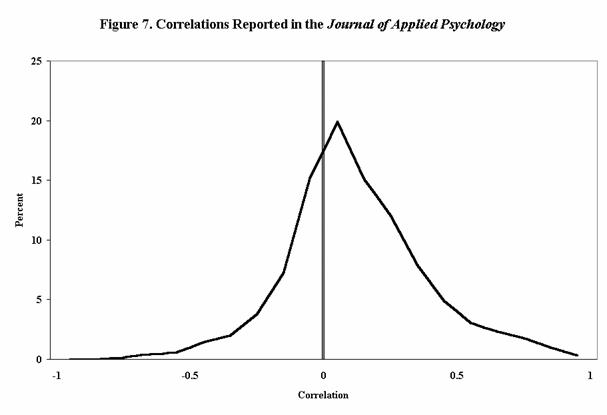

Measures

To get an estimate of the population

distribution of correlations that I/O psychologists study, we tabulated every

complete matrix of correlations that appeared in the Journal of Applied Psychology during 1983-86. This amounts to 6574

correlations from 95 articles.

We tabulated only

complete matrices of correlations in order to observe the relations among all

of the variables that I/O psychologists perceive when drawing inductive inferences,

not only those variables that psychologists actually include in hypotheses. Of

course, some studies probably gathered and analysed

data on additional variables beyond those published, and then omitted these

additional variables because they correlated very weakly with the dependent

variables. It seems well established that the variables in hypotheses are

filtered by biases against publishing insignificant results (Sterling, 1959;

Greenwald, 1975; Kerr et al., 1977).

These biases partly explain why some authors revise or create their hypotheses

after they compute correlations, and we know from personal experiences that

editors sometimes improperly ask authors to restate their hypotheses to make

them fit the data. None the less, many correlation matrices include

correlations about which no hypotheses have been stated, and some authors make

it a practice to publish the intercorrelation

matrices for all of the variables they observed, including variables having

expected correlations of zero.

To estimate the

percentage of correlations in hypotheses, we examined a stratified random

sample of 21 articles. We found it quite difficult to decide whether some

relations were or were not included in hypotheses. Nevertheless, it appeared to

us that four of these 21 intercorrelation matrices

included no hypothesized relations, that seven matrices included 29-70 per cent

hypothesized relations, and that ten matrices were made up of more than 80 per

cent hypothesized relations. Based on this sample, we estimate that 64 per cent

of the correlations in our data represented hypotheses.

Results

Figure 7 shows the observed distribution

of correlations. This distribution looks much like the comparable ones for

Administrative Science Quarterly and the Academy of Management Journal, for

which we also have data, so the general pattern is not peculiar to I/O

psychology.

It turns out that Art

Brief was nearly right on average, for the mean correlation is .0895 and the

median correlation is .0956. The distribution seems to reflect a strong bias

against negative correlations: 69 per cent of the correlations are positive and

31 per cent are negative, so the odds are better than 2 to 1 that an observed

correlation will be positive. This strong positive bias provides quite striking

evidence that many researchers prefer positive relationships, possibly because

they find these easier to understand. To express this preference, researchers

must either be inverting scales retrospectively or be anticipating the signs of

hypothesized relationships prospectively, either of which would imply that

these studies should not use statistical tests that assume a mean correlation

of zero.

Table 3 – Differences Associated with Numbers of Observations

| | N<70 | 70<N<180 | N>180 |
|---|---|---|---|
| Mean number of observations | 40 | 120 | 542 |
| Mean correlations | .140 | .117 | .064 |
| Numbers of correlations | 1195 | 1457 | 3922 |
| Percentage of correlations that are: | | | |
| Positive | 71% | 71% | 67% |
| Negative | 29% | 29% | 33% |
| Percentage of correlations that are statistically significant at .05 using two tails: | | | |
| Positive correlations | 34% | 64% | 72% |
| Negative correlations | 18% | 41% | 56% |

Studies with large numbers

of observations exhibit slightly less positive bias. Table 3 compares studies

having fewer than 70 observations, those with 70 to 180 observations, and those

with more than 180 observations. Studies with over 180 observations report 67

per cent positive correlations and 33 per cent negative ones, making the odds

of a positive correlation almost exactly 2 to 1. The mean correlation found in

studies with over 180 observations is .064, whereas the mean correlation in

studies with fewer than 70 observations is .140.
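
As a rough consistency check, the category figures in Table 3 can be pooled, weighting each category by its number of correlations. The sketch below uses only values printed in Table 3; the variable names are illustrative. Because the table entries are rounded, the pooled figures should be expected to land close to, rather than exactly on, the overall mean of .0895 and the overall 69 per cent positive reported earlier.

```python
# Category values as reported in Table 3.
categories = {
    "N<70":     {"count": 1195, "mean_r": 0.140, "positive": 0.71},
    "70<N<180": {"count": 1457, "mean_r": 0.117, "positive": 0.71},
    "N>180":    {"count": 3922, "mean_r": 0.064, "positive": 0.67},
}

# Total number of correlations across the three categories.
total = sum(c["count"] for c in categories.values())

# Count-weighted mean correlation and count-weighted share of positive correlations.
pooled_mean = sum(c["count"] * c["mean_r"] for c in categories.values()) / total
pooled_positive = sum(c["count"] * c["positive"] for c in categories.values()) / total

print("total correlations:", total)
print("pooled mean correlation:", round(pooled_mean, 4))
print("pooled share positive:", round(pooled_positive, 3))
```

The three counts sum to the 6574 correlations tabulated, and the pooled mean and pooled positive share agree with the overall figures to within rounding.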

Figure 8 compares the

observed distributions of correlations with the distributions assumed by a

typical hypothesis test. The test distributions in Figure 8 assume random

samples equal to the mean numbers of observations for each category. Compared

to the observed distributions, the test distributions assume much higher

percentages of correlations near zero, so roughly 65 per cent of the reported

correlations are statistically significant at the 5 per cent level. The

percentages of statistically significant correlations change considerably with

numbers of observations because of the different positive biases and because of

different test distributions. For studies with more than 180 observations, 72

per cent of the positive correlations and 56 per cent of the negative

correlations are statistically significant; whereas for studies with fewer than

70 observations, 34 per cent of the positive correlations and only 18 per cent

of the negative correlations are statistically significant (Table 3). Thus,

positive correlations are noticeably more likely than negative ones to be

judged statistically significant.

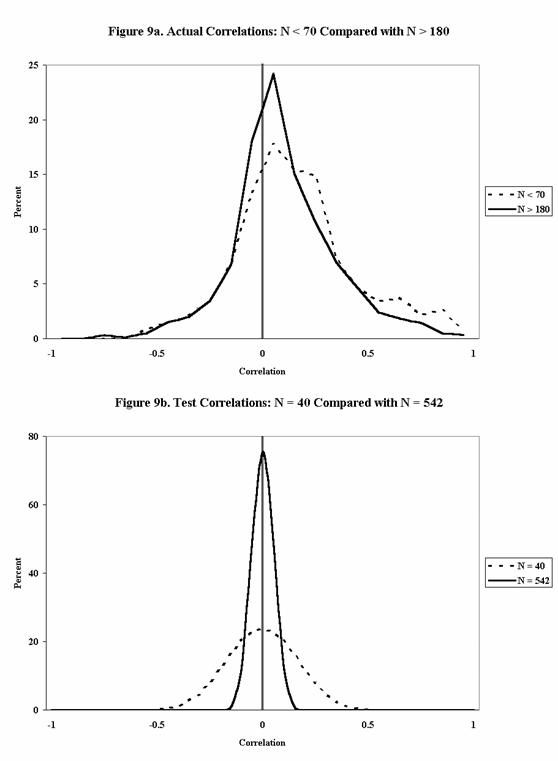

Figure 9a shows that

large-N studies and small-N studies obtain rather similar distributions of correlations.

The small-N studies do produce more correlations above + .5, and the large-N

studies report more correlations between - .2 and + .2. Both differences fit

the rationale that researchers make more observations when they are observing

correlations near zero. Some researchers undoubtedly anticipate the magnitudes

of hypothesized relationships and set out to make numbers of observations that

should produce statistical significance (Cohen, 1977); other researchers keep

adding observations until they achieve statistical significance for some

relationships; and still other researchers stop making observations when they

obtain large positive correlations. Again, graphs for Administrative Science

Quarterly and the Academy of Management Journal strongly resemble

those for the Journal of Applied Psychology.

Figure 9b graphs the

test distributions corresponding to Figure 9a. These graphs provide a reminder

that large-N studies and small-N studies differ more in the criteria used to

evaluate statistical significance than in the data they produce, and Figures 9a

and 9b imply that an emphasis on statistical significance amounts to an emphasis

on correlations that are small in absolute value.
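
To make the difference in criteria concrete, the sketch below approximates the smallest absolute correlation that a two-tailed test at the .05 level would call significant, for the mean numbers of observations in Table 3. It relies on the Fisher z approximation rather than the exact t-based cutoff, so the values are approximate; the function name is illustrative.

```python
import math
from statistics import NormalDist

def critical_r(n, alpha=0.05):
    """Approximate smallest |r| that a two-tailed test of rho = 0 calls significant.

    Uses the Fisher z approximation rather than the exact t-based cutoff.
    """
    z = NormalDist().inv_cdf(1 - alpha / 2)
    return math.tanh(z / math.sqrt(n - 3))

for n in (40, 120, 542):  # mean numbers of observations from Table 3
    print(n, round(critical_r(n), 3))
```

Under this approximation the threshold falls from roughly .31 at 40 observations to roughly .18 at 120 and roughly .08 at 542, so a correlation that would be dismissed in a small study passes easily in a large one.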

The pervasive

correlations among variables make induction undependable: starting with almost

any variable, an I/O psychologist finds it extremely easy to discover a second

variable that correlates with the first at least .1 in absolute value. In fact,

if the psychologist were to choose the second variable utterly at random, the

psychologist’s odds would be 2 to 1 of coming up with such a variable on the